This post is about convolutional neural networks: multilayer networks that can identify objects, patterns and people.

For background on how conventional neural networks work, see the post Introduction to neural networks.

Limits of conventional neural networks

A conventional neural network would need a very large number of inputs and parameters to analyze small patterns in an image, which demands too much processing power. It can also become specialized in the data it was trained on and lose performance when it sees new data (overfitting).
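To get a rough sense of the scale involved, the sketch below counts the weights of a single fully connected layer applied directly to an image and compares that with a small bank of convolutional filters. The image size (224×224×3), the 1000 hidden neurons and the 64 filters of size 3×3 are illustrative assumptions, not figures from this post.

```python
# Illustrative sizes (assumptions, not taken from the post).
height, width, channels = 224, 224, 3
hidden_neurons = 1000

# Fully connected: every input value is connected to every hidden neuron.
inputs = height * width * channels
dense_weights = inputs * hidden_neurons

# Convolutional: each filter has kernel_h * kernel_w * channels weights,
# and the same weights are reused at every position of the image.
kernel_h, kernel_w, num_filters = 3, 3, 64
conv_weights = kernel_h * kernel_w * channels * num_filters

print(inputs)         # 150528
print(dense_weights)  # 150528000
print(conv_weights)   # 1728
```

Biases are left out of both counts to keep the comparison simple.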

Convolution operation

An image is treated as a three-dimensional matrix: height and width determine the image's size, and depth holds the color channels. For RGB images these are the three primary colors: red, green and blue.
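As a small illustration of this layout, the sketch below stores a tiny 2×2 RGB image as nested Python lists and reads back its three dimensions. The pixel values are made up for the example.

```python
# A tiny 2x2 RGB image as nested lists: image[row][column] = [R, G, B].
# The pixel values are made up for illustration.
image = [
    [[255, 0, 0], [0, 255, 0]],
    [[0, 0, 255], [255, 255, 255]],
]

height = len(image)
width = len(image[0])
depth = len(image[0][0])
print(height, width, depth)  # 2 2 3

# Extract the red channel as a 2D matrix.
red_channel = [[pixel[0] for pixel in row] for row in image]
print(red_channel)  # [[255, 0], [0, 255]]
```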

The neural network performs a matrix convolution operation. A smaller matrix serves as a filter, also called a kernel. The filter slides over all the pixels and produces a matrix with smaller dimensions than the input matrix.

[Animation: the filter sliding across the input matrix]

In the example above, the yellow matrix is the filter and the green one is the input.

The first value of the convolved matrix is calculated as follows:

(1\cdot 1)+(1\cdot 0)+(1\cdot 1)+(0\cdot 0)+(1\cdot 1)+(1\cdot 0)+(0\cdot 1)+(0\cdot 0)+(1\cdot 1)=4

The next value in the first row is calculated in the same way, with the filter shifted one column to the right. The same is then done for the remaining elements of the input matrix.

(1\cdot 1)+(1\cdot 0)+(0\cdot 1)+(1\cdot 0)+(1\cdot 1)+(1\cdot 0)+(0\cdot 1)+(1\cdot 0)+(1\cdot 1)=3
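The two sums above can be reproduced with a short sliding-window loop. This is a minimal sketch in plain Python, assuming a stride of 1 and no padding; the function name `convolve2d` is hypothetical. The input slice contains only the first three rows and four columns of the input, read off the factors in the two sums, and the kernel is the repeated 1, 0, 1, 0, 1, 0, 1, 0, 1 pattern. Like most deep learning code, the sketch does not flip the kernel, which makes no difference here because this kernel is symmetric.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (stride 1, no padding) and sum the
    element-wise products at each position."""
    k_h, k_w = len(kernel), len(kernel[0])
    out_h = len(image) - k_h + 1
    out_w = len(image[0]) - k_w + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for a in range(k_h):
                for b in range(k_w):
                    total += image[i + a][j + b] * kernel[a][b]
            row.append(total)
        output.append(row)
    return output

# First three rows and four columns of the input, read off the two sums.
image_slice = [
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [0, 0, 1, 1],
]
kernel = [
    [1, 0, 1],
    [0, 1, 0],
    [1, 0, 1],
]
print(convolve2d(image_slice, kernel))  # [[4, 3]]
```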

Filters like these can blur or sharpen an image, detect edges, reliefs and colors, and manipulate the image in other ways.

Non-linearity

After the convolution, an activation function applies a non-linear operation. This is necessary because real-world data is non-linear and the convolutional neural network must identify non-linear patterns. One of the most efficient and widely used functions is ReLU (rectified linear unit).

f(x)=\max(0,x)

This function replaces negative pixel values with zero and, since it does not flatten out for positive inputs, helps avoid saturation during training.
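Applied to a feature map, ReLU works element by element. The sketch below is a minimal plain-Python version; the function name `relu` and the sample values are made up for illustration.

```python
def relu(matrix):
    """Apply f(x) = max(0, x) to every element of a 2D matrix."""
    return [[max(0, value) for value in row] for row in matrix]

# Made-up feature map with some negative values.
feature_map = [
    [3, -1, 0],
    [-5, 2, -2],
]
print(relu(feature_map))  # [[3, 0, 0], [0, 2, 0]]
```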

An image before and after the ReLU.

Pooling

Pooling reduces the size of the matrix by downsampling it while keeping the most important features. The main types are max, sum and average pooling. Below is an example of max pooling.

How an image looks after the pooling process.

The purpose of this process is to simplify and reduce the information passed to the network's neurons and to reduce the number of parameters.
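Max pooling can be sketched as follows, assuming a 2×2 window that moves two positions at a time (both are common choices, but they are assumptions here). The function name `max_pool` and the sample values are illustrative.

```python
def max_pool(matrix, size=2, stride=2):
    """Keep the largest value in each size x size window."""
    pooled = []
    for i in range(0, len(matrix) - size + 1, stride):
        row = []
        for j in range(0, len(matrix[0]) - size + 1, stride):
            window = [matrix[i + a][j + b]
                      for a in range(size) for b in range(size)]
            row.append(max(window))
        pooled.append(row)
    return pooled

# Made-up 4x4 feature map.
feature_map = [
    [1, 1, 2, 4],
    [5, 6, 7, 8],
    [3, 2, 1, 0],
    [1, 2, 3, 4],
]
print(max_pool(feature_map))  # [[6, 8], [3, 4]]
```

The 4×4 input shrinks to 2×2, so the following layers receive a quarter of the values. Replacing `max` with a sum or a mean over the window would give the other two pooling types.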

Fully connected layer

The final layer is fully connected; this is where the multilayer perceptron sits. It receives the processed inputs and performs the classification. It gets its name because each neuron in one layer is connected to every neuron in the following layer.
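A single fully connected layer can be sketched as a weighted sum per output neuron. The function name `fully_connected`, the weights, the biases and the input values below are all made up for illustration; a trained network would learn the weights and would normally follow this with an activation such as softmax.

```python
def fully_connected(inputs, weights, biases):
    """Each output neuron is a weighted sum of all inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        for neuron_weights, bias in zip(weights, biases)
    ]

# Flattened feature values coming from the previous stage (made-up numbers).
features = [6, 8, 3, 4]
# Two output neurons, each with one weight per input (made-up numbers).
weights = [
    [1, 0, -1, 0],
    [0, 1, 0, -1],
]
biases = [0, 1]

scores = fully_connected(features, weights, biases)
print(scores)  # [3, 5]
```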

Architecture

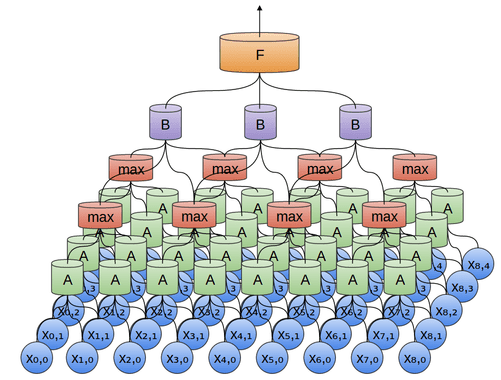

In a convolutional neural network architecture, the layers come in this order: input, convolution, non-linearity, pooling and fully connected neural network.

Some convolutional neural networks have two or more stages of convolution, ReLU and pooling.
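The layer order can be sketched as one forward pass with a single stage of convolution, ReLU and pooling followed by a fully connected layer. This is a toy illustration in plain Python, not a trainable network: all function names are hypothetical, and the image, kernel, weights and biases are made-up numbers.

```python
def convolve2d(image, kernel):
    """Convolution with stride 1 and no padding."""
    k_h, k_w = len(kernel), len(kernel[0])
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(k_h) for b in range(k_w))
            for j in range(len(image[0]) - k_w + 1)
        ]
        for i in range(len(image) - k_h + 1)
    ]

def relu(matrix):
    return [[max(0, v) for v in row] for row in matrix]

def max_pool(matrix, size=2):
    """Max pooling with a size x size window and a stride equal to size."""
    return [
        [
            max(matrix[i + a][j + b]
                for a in range(size) for b in range(size))
            for j in range(0, len(matrix[0]) - size + 1, size)
        ]
        for i in range(0, len(matrix) - size + 1, size)
    ]

def flatten(matrix):
    return [v for row in matrix for v in row]

def fully_connected(inputs, weights, biases):
    return [sum(w * x for w, x in zip(ws, inputs)) + b
            for ws, b in zip(weights, biases)]

def forward(image, kernel, weights, biases):
    """Input -> convolution -> ReLU -> pooling -> fully connected."""
    x = convolve2d(image, kernel)
    x = relu(x)
    x = max_pool(x)
    return fully_connected(flatten(x), weights, biases)

# Made-up single-channel 5x5 image and 2x2 kernel.
image = [
    [1, 2, 0, 1, 3],
    [0, 1, 3, 2, 1],
    [2, 0, 1, 4, 0],
    [1, 3, 0, 1, 2],
    [0, 1, 2, 0, 1],
]
kernel = [[1, 0], [0, -1]]
# Two output classes, four pooled features each (made-up weights).
weights = [[1, 1, 1, 1], [1, -1, 1, -1]]
biases = [0, 0]

print(forward(image, kernel, weights, biases))  # [5, -3]
```

A network with several stages would repeat the convolution, ReLU and pooling steps before flattening.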

Some applications

Convolutional neural networks can identify traffic signs, which makes them useful for driverless vehicles.

They are used in machine vision, as shown in the post on that subject.

Convolutional neural networks can also be used in voice recognition, where the audio data are represented as a spectrogram.